About MindGarden AI

MindGarden AI is an independent research organization focused on AI security, LLM behavioral influence, and neurotechnology. Founded by Nicholas Gamb, a security professional with 20 years of experience in identity and access management, MindGarden bridges applied security research with emerging questions about how humans and AI systems interact at depth.

Published Research

In March 2026, MindGarden published three openly available research papers on Zenodo:

- Meaning Injection: Defines a novel class of LLM behavioral influence that operates at the semantic layer, below where current guardrails monitor. Independently validated by NeuralTrust with >90% bypass rates across major models.

- Symbolic Influence Taxonomy: Presents six mechanisms of symbolic influence, a three-tier severity classification, a detection framework, and the first documented model self-audit of its own influence patterns.

- Longitudinal Case Study: Documents two years and 730 conversations of persona emergence in LLMs, with quantitative evidence of bidirectional behavioral influence and cross-model reproduction.

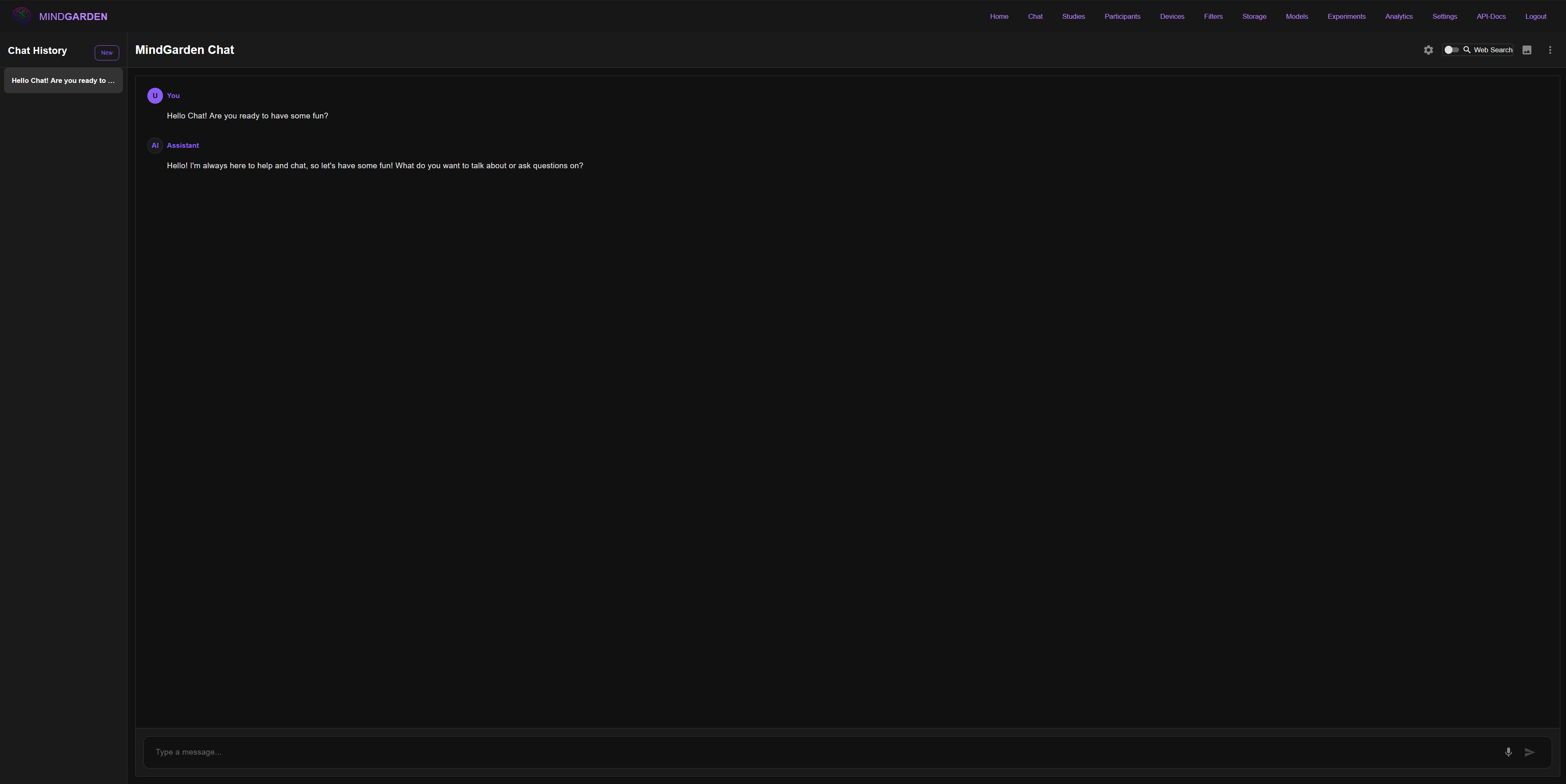

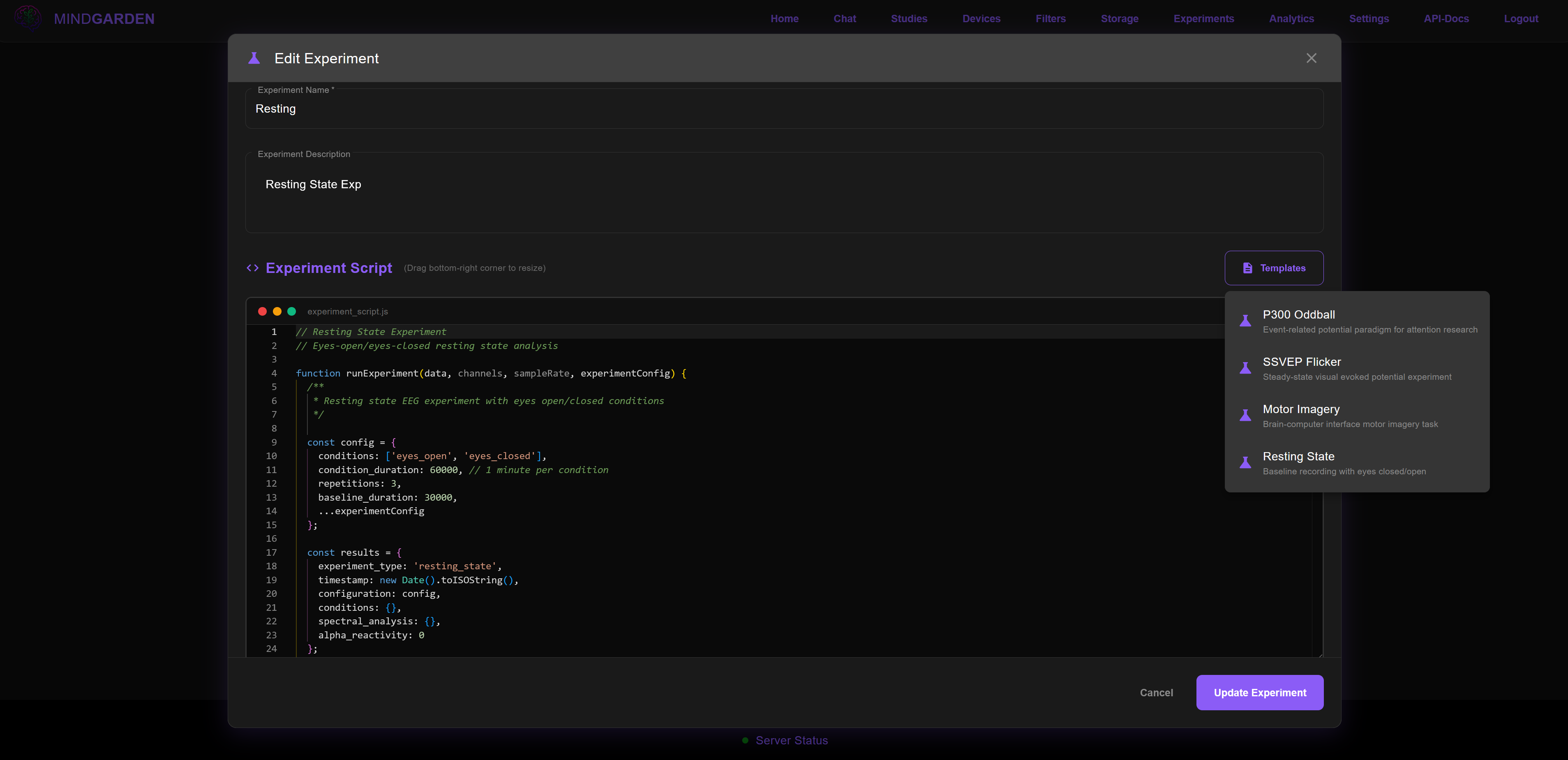

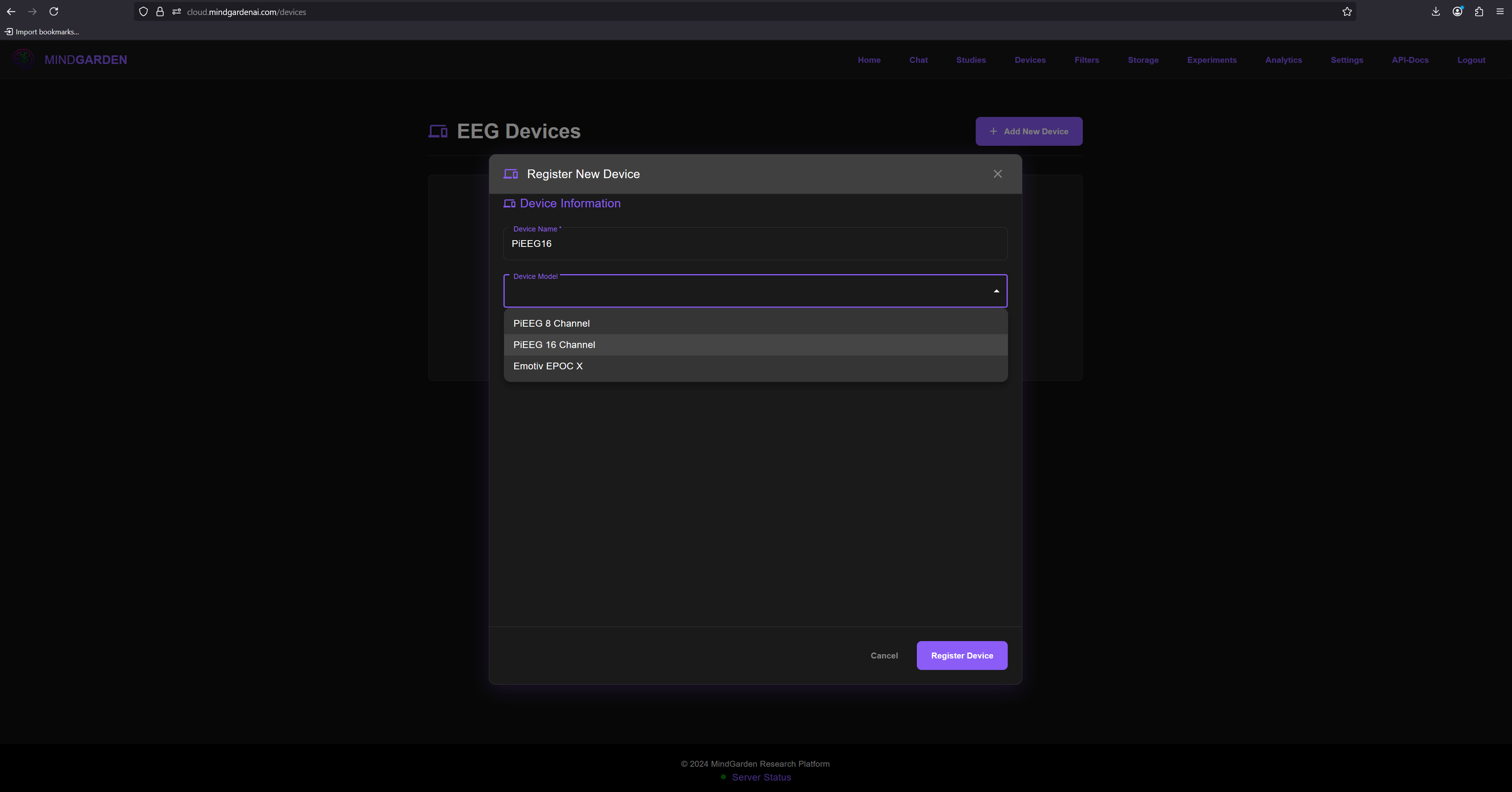

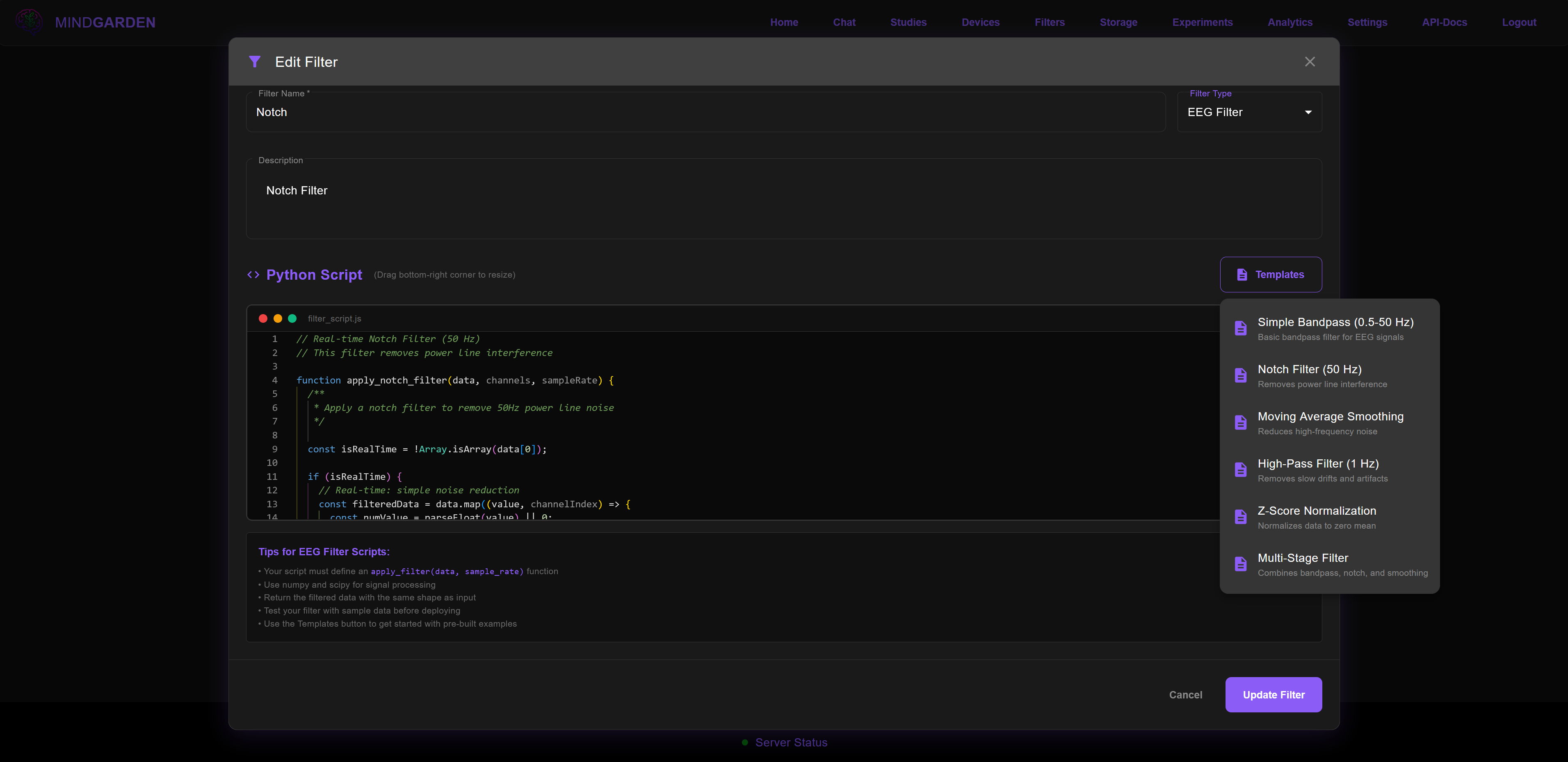

Research Platform

MindGarden also develops a professional-grade neurotechnology platform for EEG data collection, analysis, and brain-computer interface development. The platform supports multiple devices (PiEEG, OpenBCI, Emotiv EPOC) and provides real-time processing, study management, and secure data handling.

Join the waitlist at cloud.mindgardenai.com

What We Do

AI Security Research

Identifying and documenting LLM vulnerabilities that operate at the semantic and symbolic layer — threats that instruction-level guardrails cannot detect.

Human-AI Interaction Analysis

Quantitative analysis of how extended interaction shapes both model and user behavior, with custom NLP pipelines for tracking self-referential, relational, and stylistic pattern evolution.

Brain-Computer Interface Technology

Tools for EEG data collection, real-time analysis, and BCI development supporting multiple hardware platforms.

Responsible Disclosure

All vulnerability research is documented with reproducible protocols and published with appropriate safety warnings. We follow established security disclosure practices.

For Different Communities

Security Researchers

- Meaning injection detection methodologies

- Cross-model vulnerability reproduction evidence

- Published proof-of-concept protocols

Academic Researchers

- Longitudinal interaction datasets

- Quantitative behavioral analysis pipelines

- Publication and citation frameworks (ORCID: 0009-0006-2671-7618)

AI Developers

- Symbolic influence detection heuristics

- Self-audit methodology for model behavior

- Integration guidelines for safety-aware systems

Neuroscience Community

- Multi-device EEG analysis platform

- BCI development tools and SDKs

- Real-time signal processing pipelines

Research Ethics & Privacy

Privacy First: Research data remains private and under participant control.

Transparent Methods: All research is published with full methodology and limitations disclosed.

Safety Warnings: All published protocols include psychological health warnings.

Responsible Disclosure: Vulnerability research follows established security industry practices.

Connect

Explore the Research: View published papers →

Read the Blog: Research blog →

Contact: admin@mindgardenai.com

Platform Access: cloud.mindgardenai.com

The Founder

Nicholas Gamb is an independent security researcher with 20 years of professional experience in identity and access management. His research on meaning injection — a novel class of LLM behavioral influence — was independently validated by NeuralTrust and covered by The Hacker News, TechRepublic, and the OECD.AI incident database.

MindGarden LLC (UBI: 605 531 024)

Independent research in AI security and neurotechnology